Global Support for India's AI Impact Summit Declaration

On February 21, 2026, the U.S., U.K., EU, and 85 other countries and organizations endorsed India's AI Impact Summit declaration. However, the agreement contains no binding safety commitments or enforcement measures, raising doubts about whether international AI cooperation can deliver real safeguards.


2/23/2026 · 5 min read

88 countries endorse AI declaration at India summit with little binding commitment to safety

The world's major powers gathered in New Delhi and declared their commitment to responsible artificial intelligence development. But the agreement they signed avoids the hard questions about enforcement, accountability, and who decides what "responsible" actually means.

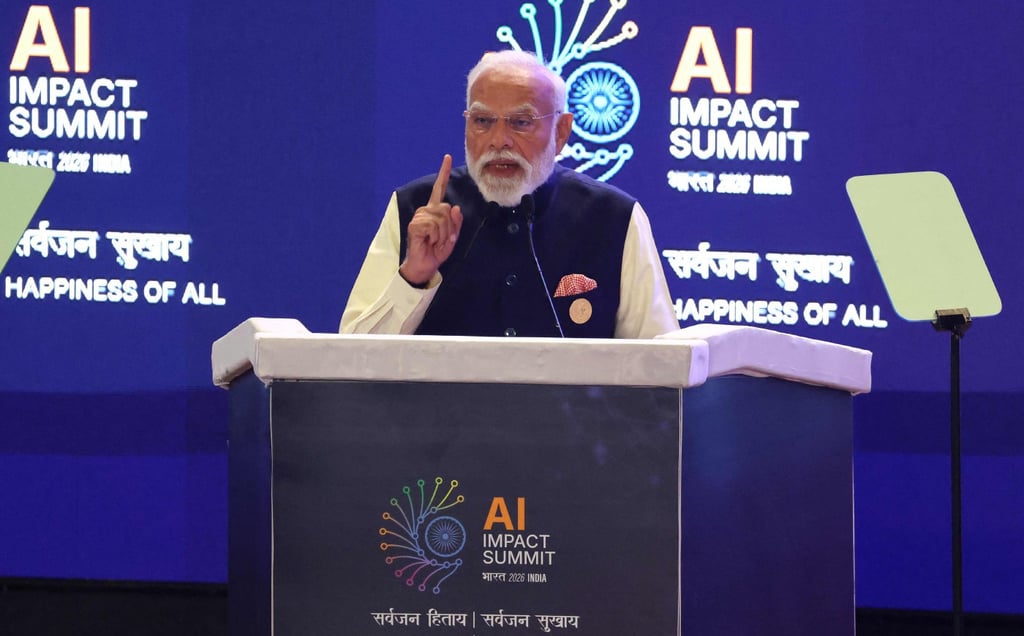

Some 88 countries and international organizations endorsed the AI Impact Summit Declaration on Saturday, February 21, 2026, following two days of meetings at Bharat Mandapam in New Delhi. The United States, United Kingdom, European Union member states, and nations from every continent signed the document recognizing "the importance of security" in AI development.

What they didn't sign was any binding commitment to actually keep AI systems safe.

A declaration heavy on principles, light on enforcement

The New Delhi Declaration opens with the Sanskrit principle "सर्वजन हिताय, सर्वजन सुखाय" (welfare for all, happiness of all), positioning AI's promise as best realized when benefits reach all of humanity. The document acknowledges that "the choices we make today will shape the AI-enabled world that future generations will inherit."

Noble sentiments. But the agreement includes no mechanisms for monitoring compliance, no penalties for violations, no standards for what constitutes "safe" AI, and no authority empowered to enforce any of its provisions.

According to reporting from POLITICO, the declaration "doesn't include any binding measures on how to keep the technology safe." Countries can sign the document, return home, and continue AI development programs with zero obligation to change anything they're currently doing.

This represents a significant retreat from what AI safety advocates hoped the summit would achieve. Groups including the EFF and the AI Now Institute, along with various academic researchers, had called for concrete commitments: mandatory safety testing for powerful AI systems, transparency requirements for training data and model capabilities, liability frameworks for AI-caused harms, and international cooperation on monitoring and enforcement.

None of that made it into the final declaration.

Why binding commitments remain politically impossible

The summit's failure to produce enforceable standards reflects fundamental tensions that have paralyzed international AI governance efforts:

Different countries have incompatible priorities. The United States views AI as critical for maintaining technological and military superiority over China. China sees AI as essential for economic development and social stability. The European Union prioritizes privacy and individual rights. Developing nations worry about being locked out of AI benefits while facing AI-driven disruptions to their economies.

No country wants to constrain its own AI development while competitors forge ahead unconstrained. This creates a classic prisoner's dilemma: every nation would benefit from global AI safety standards, but each has an incentive to defect from them whenever doing so provides a competitive advantage.

Technical challenges make enforcement nearly impossible. Unlike nuclear weapons programs that require visible enrichment facilities and testing, AI development happens in datacenters that look identical whether they're training safe systems or potentially dangerous ones. Monitoring compliance would require unprecedented intrusion into private sector computing infrastructure that governments and companies alike would resist.

The technology evolves faster than governance structures can adapt. International treaties typically require years of negotiation followed by domestic ratification processes. By the time binding AI agreements could take effect, the technology landscape may have shifted so dramatically that the agreements become irrelevant or counterproductive.

What the declaration does (and doesn't) accomplish

The New Delhi Declaration establishes several working groups focused on AI safety research, capacity building in developing countries, and best practices for responsible AI development. These initiatives could yield valuable coordination even without enforcement mechanisms.

The summit reportedly brought together political leaders and tech executives, including Sundar Pichai of Google, Sam Altman of OpenAI, and Dario Amodei of Anthropic, alongside government officials from most major economies. These dialogues matter even when they don't produce binding treaties: personal relationships and shared understanding among key decision-makers can influence behavior even without formal enforcement.

India's hosting of the summit reflects the country's ambition to position itself as an AI innovation hub. With over 100 million weekly ChatGPT users and massive investments, including Reliance Industries' $110 billion AI infrastructure commitment announced during the summit, India is seeking a leading role in shaping global AI governance.

Prime Minister Narendra Modi emphasized in his February 19 opening address that "India cannot afford to rent intelligence," positioning AI development as a matter of digital sovereignty. Fellow speakers including French President Emmanuel Macron and UN Secretary-General António Guterres echoed themes of ensuring AI benefits reach all nations, not just those with advanced technology industries.

The enforcement gap will define AI's trajectory

The absence of binding commitments in the New Delhi Declaration highlights the central challenge facing global AI governance: powerful nations and companies will not voluntarily constrain themselves when they believe AI superiority provides strategic advantage.

This creates a race dynamic in which countries and companies feel pressure to move fast even when moving carefully would produce better long-term outcomes. Microsoft AI CEO Mustafa Suleyman's recent prediction that AI will automate "most white-collar tasks" within 12 to 18 months illustrates how quickly industry leaders expect the technology to advance.

Some AI researchers argue binding agreements are unnecessary because market forces and liability laws will naturally incentivize safe AI development. Companies that deploy harmful systems will face lawsuits, reputation damage, and customer abandonment, creating self-regulating incentives toward safety.

Critics counter that by the time market forces correct for AI harms, catastrophic damage could already be done. They point to social media, opioids, and financial derivatives as cases where industry self-regulation proved inadequate to prevent massive societal costs.

What comes after New Delhi

The summit's organizers announced follow-up meetings scheduled for late 2026 and early 2027, with locations still to be determined. Whether those gatherings produce more concrete commitments depends partly on what happens over the next 6-12 months as AI capabilities continue advancing.

Several scenarios could shift the governance landscape:

If AI systems cause a high-profile disaster, such as algorithmic trading triggering a financial crisis, autonomous vehicles causing mass casualties, or AI-generated content creating political chaos, public pressure could force governments to accept binding constraints they currently resist.

If one major power (likely the EU, given its regulatory activism) implements stringent AI rules that prove workable and effective, other countries might follow that proven model rather than negotiate fresh international agreements.

If AI progress slows or plateaus, reducing perceived urgency around transformative AI risks, the window for binding agreements could close as political attention shifts elsewhere.

If AI capabilities advance so rapidly that alignment and control become obviously urgent problems, even competitive nations might recognize that cooperation serves everyone's interests better than unilateral races to deploy increasingly powerful systems.

The declaration's real message

The New Delhi Declaration sends a clear signal about the state of international AI governance in 2026: countries recognize AI as important and potentially risky, but not important or risky enough to accept constraints on their own development programs.

This represents honest diplomacy rather than failure. The summit produced an agreement that participating nations could actually sign rather than aspirational language that would have been dead on arrival in domestic political processes.

But the gap between what AI safety advocates hoped for and what the declaration delivers highlights the fundamental challenge ahead. As AI systems grow more capable and more deeply integrated into economic and military infrastructure, the window for establishing international governance frameworks may close entirely.

Nations that invest hundreds of billions in AI infrastructure are unlikely to accept limits on returns from those investments. Companies racing to deploy AI products face competitive pressure to move fast even when moving carefully would be wiser. And the technology itself advances faster than governance structures can adapt.

The 88 countries that signed the New Delhi Declaration acknowledged these tensions without resolving them. Whether that honest acknowledgment represents the first step toward effective governance or merely diplomatic theater performing concern without commitment will become clear over the next 12-24 months.

For now, AI development continues at a breakneck pace, with declarations of good intent but no enforceable guardrails.